DevOps at Edge Solutions Lab

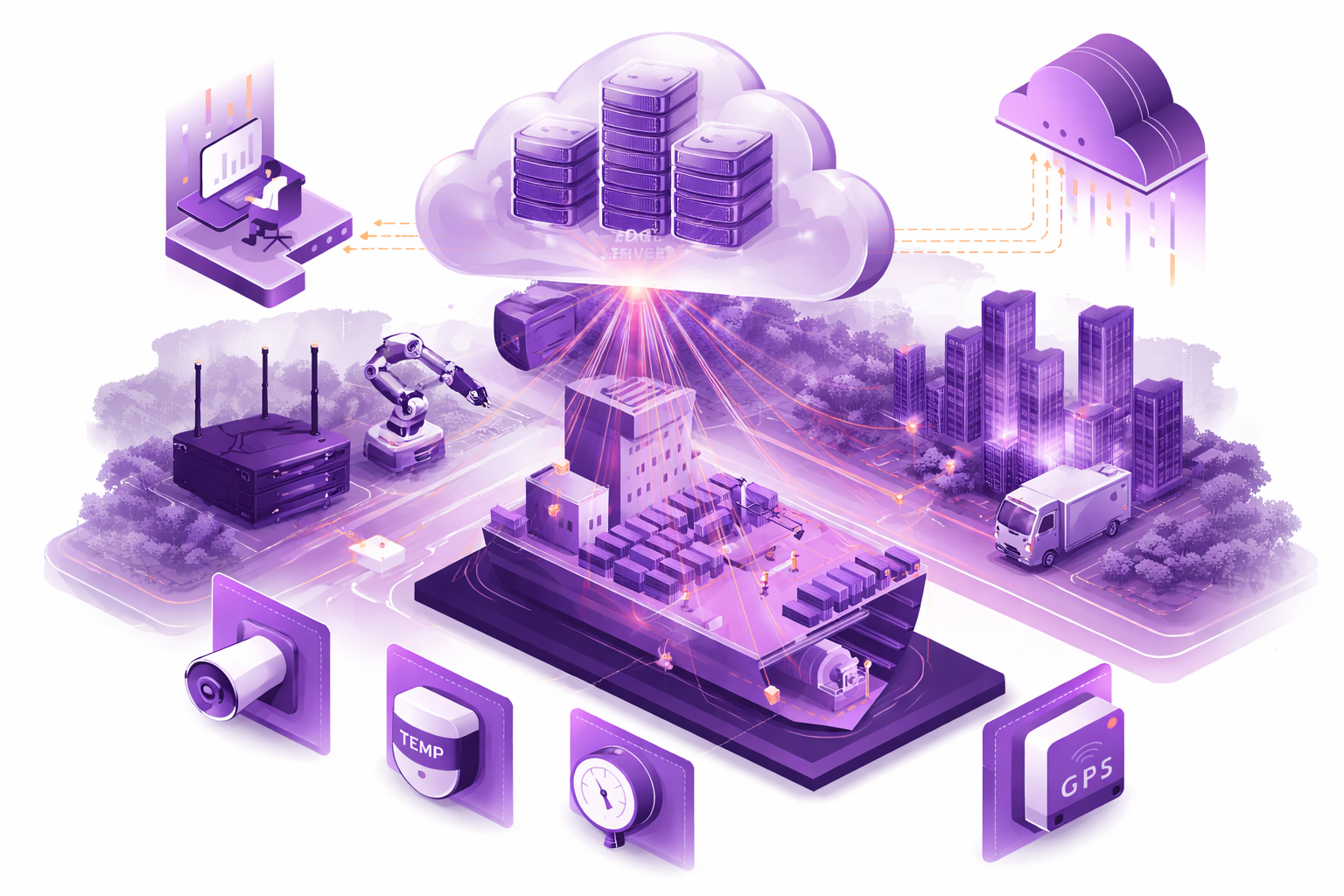

We specialize in automating the deployment, configuration, and scaling of edge environments using modern DevOps practices.

Our team has strong expertise in Infrastructure as Code (IaC), using Ansible and other tools to ensure scalable, repeatable, and auditable infrastructure automation from edge to cloud.

In addition to edge environments, we also support deployment of cloud-native and hybrid infrastructures, working across multiple cloud providers including AWS, Azure, Google Cloud, DigitalOcean, and others. We use Terraform and Bicep to provision and manage resources across multiple accounts, projects, and environments.

Our approach ensures that software is delivered consistently and securely — whether to a single node or a global fleet of distributed edge devices.

The Advantages of Environment Deployment & DevOps with Edge Solutions Lab

Technical Advantages

Automated CI/CD Pipelines.

Service Orchestration.

Infrastructure As Code (IaC).

End-to-End Deployment Expertise.

Scalable & Flexible Architectures.

Monitoring & Observability.

Resource Optimization.

Reliability & Security Benefits

Secure Infrastructure Deployment.

Compliance & Governance.

Disaster Recovery & High Availability.

Continuous Security Monitoring.

Business & Operational Advantages

Infrastructure Analysis & Cost Optimization.

Accelerated Time-to-Value.

Reduced Operational Overhead.

Elastic Scaling.

Lifecycle Management.

Integration with Edge, Cloud & Hybrid Systems.

Flexible Delivery Models.

Ready to implement Environment Deployment & DevOps in your project?

How Is It Made?

Orchestration Analysis and Validation

We use lightweight Kubernetes distributions such as K3s, MicroK8s, or custom-optimized container runtimes depending on hardware constraints. For larger systems, we also support full Kubernetes clusters or hybrid orchestration models (cloud-edge coordination). Additionally, our team has deep expertise with standard Kubernetes deployments as well as HashiCorp Nomad — enabling us to tailor the orchestration layer to your specific performance, scalability, and architectural requirements.

- Service discovery, pod orchestration, and resource monitoring are fully automated

- We integrate Helm charts, GitOps tools (e.g., ArgoCD), and Kubernetes secrets for secure and version-controlled deployments

- Environments are designed to support offline resiliency and graceful rollback during intermittent connectivity

We tailor the orchestration stack to match the specific use case: real-time inference, data collection, control systems, or IoT event routing.
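As a rough illustration of the offline resiliency and graceful rollback described above, the sketch below defers an update while a node is disconnected and reverts to the previous release if a health check fails. It is a minimal sketch, not our production tooling: `EdgeNode`, `sync`, and the `healthy` callback are hypothetical names introduced only for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class EdgeNode:
    """Hypothetical edge node that tracks which release it is running."""
    current: str
    history: List[str] = field(default_factory=list)

    def apply(self, version: str, healthy: Callable[[str], bool]) -> bool:
        """Switch to `version`; revert to the previous release if unhealthy."""
        previous = self.current
        self.current = version
        if healthy(version):
            self.history.append(previous)
            return True
        self.current = previous  # graceful rollback to the last good release
        return False


def sync(node: EdgeNode, desired: str, online: bool,
         healthy: Callable[[str], bool]) -> str:
    """Try to bring the node to `desired` and report what happened."""
    if not online:
        # Offline: keep serving the current release and retry later.
        return "deferred"
    if desired == node.current:
        return "up-to-date"
    return "updated" if node.apply(desired, healthy) else "rolled-back"
```

In a real deployment the health check would typically be a readiness probe or smoke test, and the retry would be driven by the orchestrator or a GitOps agent rather than by a direct function call.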

CI/CD Automation

We build robust CI/CD pipelines that support continuous delivery to both cloud and edge targets. This includes:

- Automated builds and image packaging (Docker, Buildroot, Yocto)

- Cross-compilation and testing for edge hardware targets

- Staging environments for QA and pre-deployment simulation

- Over-the-air (OTA) update pipelines with version control and rollback

- Triggering deployments via GitHub Actions, GitLab CI, Jenkins, or customer-preferred platforms

- GitOps-based deployment automation using tools like ArgoCD and Flux, enabling declarative, version-controlled, and auditable updates across distributed edge fleets

CI/CD ensures that firmware, drivers, and app layers can be updated securely and without downtime.
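One common way to limit the impact of a bad OTA update across a fleet is to roll it out in growing waves and halt when too many devices in a wave fail. The sketch below illustrates that idea under stated assumptions: `staged_rollout`, the `update` callback, the wave sizes, and the failure threshold are all hypothetical and would be tuned per project.

```python
from typing import Callable, Dict, List, Sequence


def staged_rollout(
    devices: Sequence[str],
    update: Callable[[str], bool],
    wave_sizes: Sequence[int] = (1, 5, 25),
    max_failure_rate: float = 0.2,
) -> Dict[str, List[str]]:
    """Update devices in growing waves and halt if a wave fails too often.

    `update` is a hypothetical callback that installs the new version on one
    device and returns True on success. Wave sizes must be positive.
    """
    result: Dict[str, List[str]] = {"updated": [], "failed": [], "skipped": []}
    start = 0
    wave = 0
    while start < len(devices):
        # Reuse the last wave size once the configured sizes run out.
        size = wave_sizes[min(wave, len(wave_sizes) - 1)]
        batch = devices[start:start + size]
        failures = 0
        for device in batch:
            if update(device):
                result["updated"].append(device)
            else:
                result["failed"].append(device)
                failures += 1
        start += len(batch)
        wave += 1
        if failures / len(batch) > max_failure_rate:
            # Halt: the remaining devices keep their current version.
            result["skipped"] = list(devices[start:])
            break
    return result
```

Starting with a single device keeps the first wave cheap to roll back; devices listed under `failed` would then be handled by the pipeline's rollback step.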

Connectivity & Access Control

We establish secure and intelligent networking between cloud and edge environments:

- Encrypted connections (TLS, VPN such as WireGuard or others) with fallback mechanisms

- Remote provisioning using onboarding tokens or signed device certificates

- Edge gateways and NAT traversal for remote support and telemetry

- Role-based access control (RBAC) and the principle of least privilege to protect connectivity data and operational commands

- Integration with secrets managers (Vault, AWS Secrets Manager) for storing credentials, keys, and tokens securely

Only authorized services and operators can access edge nodes or push configuration changes — all interactions are logged and auditable.
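The combination of role-based access control and audit logging can be sketched as follows. This is an illustrative example only: the role names, permission strings, and the `AccessController` class are assumptions made for this sketch, not the actual policy model used in any given deployment.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, FrozenSet, List

# Hypothetical roles and permissions, chosen only for illustration.
# Each role gets the smallest set of actions it needs (least privilege).
ROLE_PERMISSIONS: Dict[str, FrozenSet[str]] = {
    "viewer": frozenset({"telemetry:read"}),
    "operator": frozenset({"telemetry:read", "node:restart"}),
    "deployer": frozenset({"telemetry:read", "config:push"}),
}


@dataclass
class AccessController:
    """Authorizes actions on edge nodes and records every decision."""
    audit_log: List[dict] = field(default_factory=list)

    def authorize(self, principal: str, role: str, action: str, node: str) -> bool:
        """Allow the action only if the role grants it; log allow and deny alike."""
        allowed = action in ROLE_PERMISSIONS.get(role, frozenset())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "principal": principal,
            "role": role,
            "action": action,
            "node": node,
            "allowed": allowed,
        })
        return allowed
```

Unknown roles fall through to an empty permission set, so the default is deny, and denied attempts are logged alongside allowed ones so that the audit trail is complete.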

Ready to explore how to implement Environment Deployment & DevOps in your project?

Is Environment Deployment & DevOps the Right Move for Your Project?

Define Your Deployment Requirements

Identify the environments where your solution must operate — edge devices, private clouds, public clouds, or hybrid setups. Consider performance needs, latency requirements, security policies, compliance standards, and integration with existing infrastructure.

Evaluate Existing Tools & Pipelines

Check whether off-the-shelf CI/CD platforms, monitoring tools, and orchestration frameworks can meet your requirements. If compromises in automation, reliability, or security are too significant, a customized DevOps approach may be the better choice.

Analyze Cost, Complexity & Lifecycle

Estimate operational costs, maintenance effort, and lifecycle needs. Well-planned DevOps practices reduce downtime, lower infrastructure expenses, and extend the usability of your platforms. Custom pipelines and infrastructure-as-code become especially valuable in long-term, large-scale projects.

Plan for Scalability & Flexibility

Think ahead to future growth — will your application need to scale across multiple regions, handle higher data loads, or adapt to new platforms and services? Designing scalable deployment pipelines early prevents expensive re-engineering later.

Engage with a DevOps & Deployment Expert

The Edge Solutions Lab team supports you with infrastructure design, CI/CD setup, monitoring, and automation — ensuring your applications are production-ready, resilient, and optimized for continuous improvement.

Let’s find out if Edge is the right fit — and what it could mean for your future

The sooner you evaluate your Edge readiness, the sooner you can unlock faster response times, smarter automation, and scalable digital operations.

Frequently Asked Questions

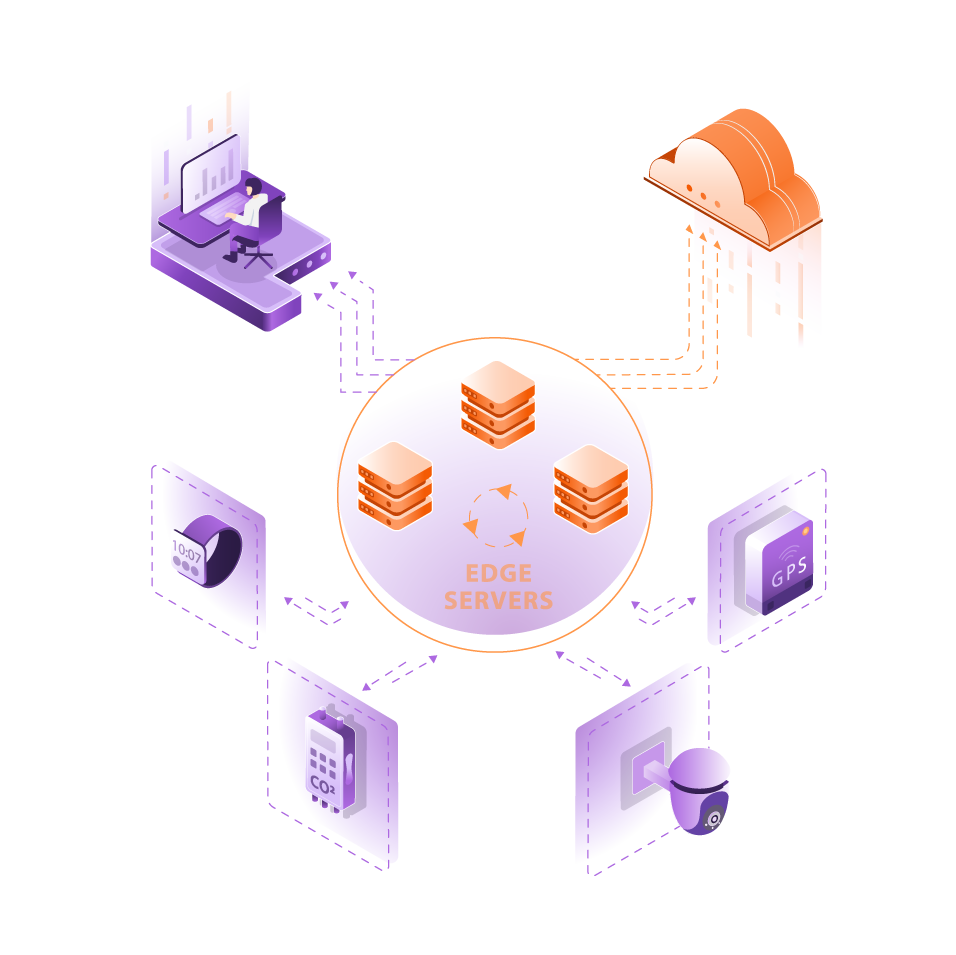

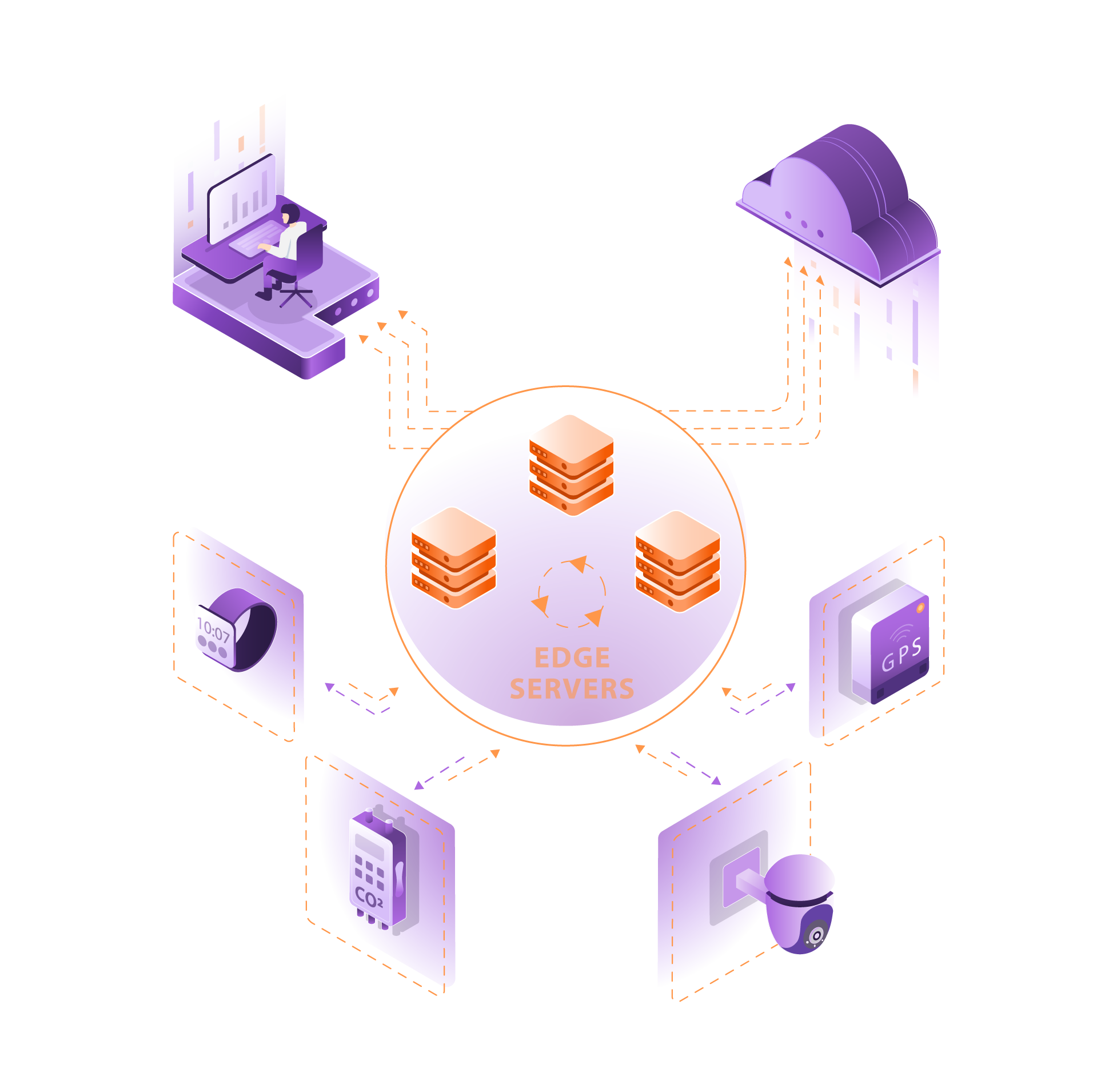

What is Edge Computing and How Does it Work?

Edge computing refers to processing data near the source of data generation rather than relying on a centralized data center. This approach reduces latency, enhances application performance, and improves the efficiency of computing resources by utilizing distributed edge nodes. By deploying computing resources at the network edge, organizations can achieve faster data processing and real-time analytics.

How Does DevOps Integrate with Edge Computing?

Integrating DevOps into edge computing involves applying DevOps principles to streamline the development and deployment of edge applications. This integration enhances collaboration among DevOps teams, allowing for continuous integration and continuous deployment (CI/CD) processes that are essential for managing edge computing environments effectively. By incorporating DevOps practices, organizations can improve the delivery speed of edge applications and ensure high-quality performance.

What Are the Benefits of Edge Deployment?

Edge deployment offers numerous benefits, including reduced latency, improved application performance, and better use of bandwidth. By processing data closer to the source, organizations can minimize the time taken for data transmission to cloud resources. Additionally, edge computing enables better handling of critical data, enhancing the capabilities of edge devices and supporting applications that require real-time processing.

What Are Common Use Cases for Edge Computing?

Use cases for edge computing span various industries and applications, such as smart cities, autonomous vehicles, IoT devices, and healthcare monitoring systems. These applications benefit from edge computing due to the need for immediate data processing and low latency. By deploying edge applications, organizations can enhance operational efficiency and enable innovative solutions in distributed systems.

What Challenges Are Associated with Edge Computing?

Challenges in edge computing include managing distributed systems, ensuring security across edge networks, and maintaining consistent application performance. Organizations must address the complexities of deploying applications across various edge devices and environments while ensuring that data integrity and security are upheld. Additionally, the distributed nature of edge computing requires robust management strategies to avoid operational bottlenecks.

How Can Organizations Manage Edge Computing Environments?

Managing edge computing environments involves implementing effective monitoring and management solutions to oversee distributed edge nodes. Organizations can utilize DevOps solutions to automate deployment processes, monitor application performance, and ensure that edge computing architectures function seamlessly. By integrating edge computing into DevOps practices, teams can streamline operations and enhance responsiveness to changing conditions.

What Role Does DevOps Play in Edge Application Development?

DevOps plays a crucial role in edge application development by fostering collaboration between development and operations teams. This convergence of DevOps practices ensures that edge applications are developed swiftly while maintaining high-quality standards. By implementing DevOps technologies, organizations can accelerate the deployment of edge applications and adapt to the evolving demands of edge computing environments.

What is the Future of Edge Computing?

The future of edge computing looks promising as more organizations recognize its potential to transform application development and deployment. With advancements in AI and machine learning, edge computing is set to enhance data processing capabilities and enable smarter decision-making at the edge of the network. As the demand for real-time data analysis grows, edge computing will play a pivotal role in shaping the next generation of technology solutions.