What Is Edge Computing? How It Works with Cloud Computing?

Edge computing is becoming one of the key technologies shaping modern digital systems, cloud platforms, and smart infrastructure.

Today, businesses generate more data than ever before — and they expect answers immediately. Traditional cloud models, where everything is sent to centralized data centers, can’t efficiently handle all workloads anymore.

Edge computing addresses this by moving computation closer to where data is generated. The reason edge computing has become so important is a mix of technological progress and economics.

First, hardware has improved dramatically. Small and compact devices — such as industrial gateways or single-board computers — can now deliver performance levels once reserved for large cloud data centers. Modern edge devices often include multi-core processors, powerful GPUs, and large amounts of memory. That means they can handle complex workloads directly at the data source.

Second, hardware has become much more cost-efficient. Companies can now deploy relatively affordable edge infrastructure that runs advanced software workloads, which previously required expensive cloud computing resources. This helps businesses control costs while still maintaining high performance and reliability.

Another major reason for edge adoption is the rapid growth of artificial intelligence. AI doesn’t replace cloud computing — instead, it works together with it. In most real-world scenarios, large AI models are trained in the cloud, where massive computing power is available. But once those models are trained, they can be optimized and deployed on edge devices for real-time inference. This speeds decision-making, reduces latency, enhances privacy, and enables systems to keep operating even with limited connectivity.

In the following sections, we’ll explain what edge computing is, how it works, the benefits it offers, and how it supports technologies such as IoT, 5G, AI, and autonomous systems.

Introduction: What Is Edge Computing?

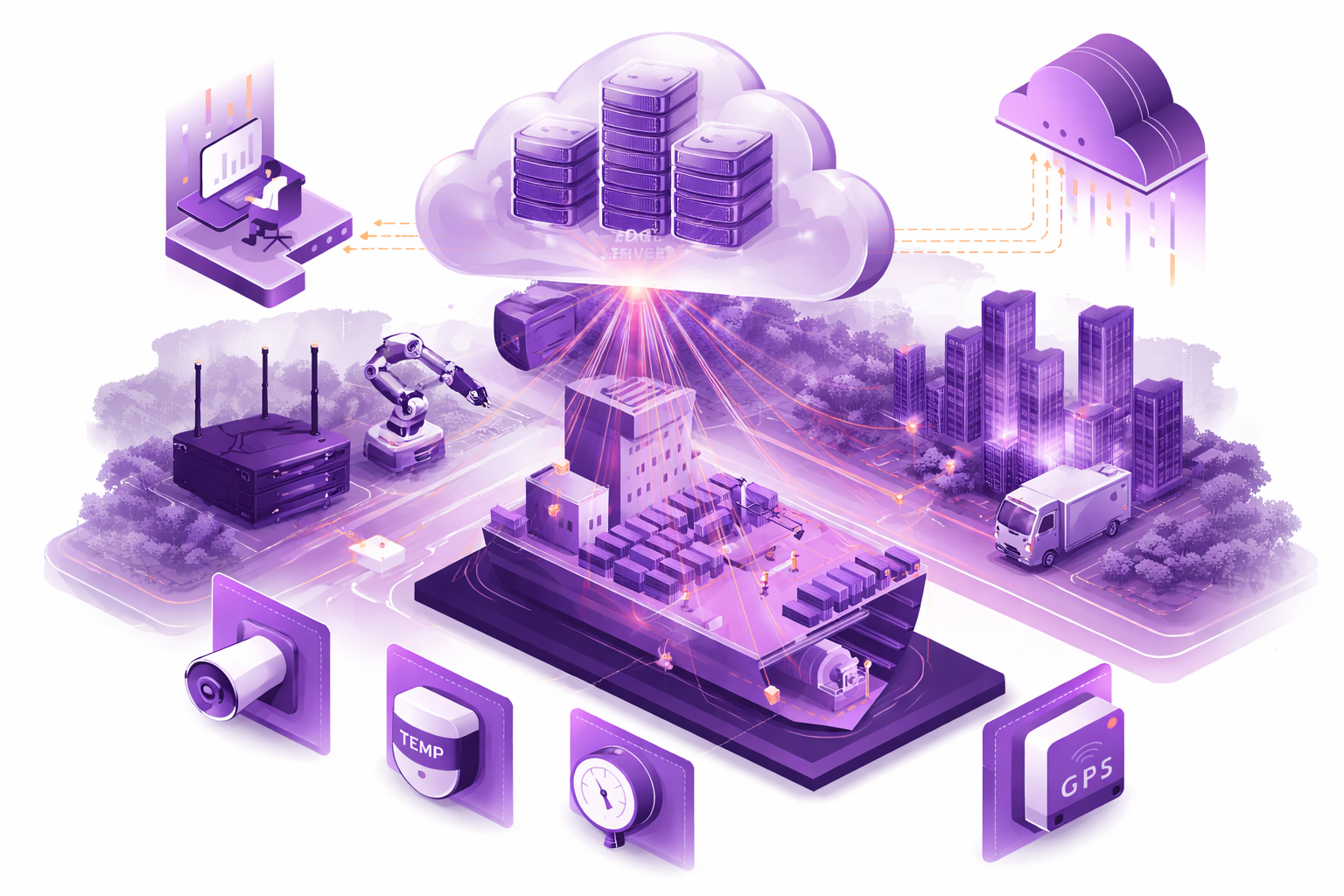

Edge computing is a distributed computing model in which data is processed at or near the point of generation — such as on local devices, gateways, micro data centers, or edge servers — rather than sending everything to centralized cloud infrastructure.

This approach is especially important in environments where milliseconds matter, data volumes are very large, and quick decisions directly affect safety or operational outcomes.

The cloud still plays an important role, but it is not responsible for everything. Instead, the cloud supports the system by handling centralized analytics, long-term storage, reporting, and regulatory needs.

In this setup, only processed and aggregated data is sent to the cloud. Sensitive raw data remains local. This improves security, reduces bandwidth usage, and allows systems to continue operating even during network interruptions.

This hybrid edge–cloud model demonstrates the real value of edge computing: time-sensitive and critical tasks are handled locally, while the cloud provides scalability, orchestration, and deeper analysis. Together, they create a system in which each workload runs in the most suitable location from both technical and economic perspectives.

Ready to explore how to bring the Cloud experience to the Edge in your project?

How Edge Computing Works?

At its core, edge computing moves computing power closer to the “edge” of the network — meaning closer to sensors, machines, users, and devices that generate data. By doing this, systems reduce latency, avoid unnecessary data transfers, and enable real-time decisions where events occur.

However, when we talk about distributed architecture in the context of edge computing, it’s important to clarify what that really means.

Today, many edge deployments are still relatively simple. Often, an edge setup consists of a single on-site edge server or device, with basic replication to another device for backup. While this already provides significant advantages compared to cloud-only systems, it is only the first stage of edge development.

“Fully distributed edge architectures — where multiple edge nodes coordinate, share workloads, and function as a unified system — are still not very common. This is because building reliable, synchronized, and secure distributed software for constrained edge hardware and unstable networks is complex. As a result, this area remains one of the least developed parts of the edge computing market.”

Ivan Shulak, Technical Project Manager, Solutions Architect, ESL

Edge computing does not replace cloud computing. Instead, it changes the role of the cloud. The cloud remains ideal for centralized orchestration, large-scale analytics, long-term data storage, and AI model training. The edge, especially when built as a distributed system, focuses on real-time execution, local intelligence, and operational continuity. Together, they form a hybrid architecture in which each layer performs the tasks for which it is best suited.

Edge Components and Data Processing

Edge infrastructure typically includes IoT devices, industrial sensors, edge gateways, and servers that run containerized applications or AI workloads. These devices collect raw data, process it locally, filter or aggregate it, and sometimes take immediate action — for example, shutting down machinery during a malfunction or adjusting temperature settings in a smart building.

Traditionally, edge systems were considered small-scale — typically involving a single server or a limited number of devices running independent workloads. However, this is changing.

One of the most important trends in edge computing is edge clustering. Instead of relying on a single device, multiple edge devices can be combined into one logical compute cluster. This allows workloads to be distributed across several nodes, similar to how cloud environments operate.

By using orchestration technologies, containerized applications can run across multiple edge nodes simultaneously. If additional computing power is needed, new devices can be connected to the network and added to the cluster. This approach makes edge scaling much more practical than it was in the past.

In distributed edge environments, nodes still process sensor data locally, normalize high-frequency streams, and run AI inference models. The key difference is that nodes can coordinate with each other — sharing workloads, synchronizing state, and maintaining system availability even if individual nodes fail.

Because logic and coordination remain local, systems can continue operating even if connectivity to the cloud is temporarily lost. This level of autonomy is especially important in healthcare, industrial automation, defense, and research applications.

Why Edge Computing Matters?

Edge computing is becoming essential as the number of connected devices continues to grow and more applications require real-time response.

One of its main advantages is low latency. In systems like autonomous vehicles, robotics, or patient monitoring, delays caused by sending data to the cloud are unacceptable. Processing data locally eliminates this delay.

Edge computing also improves data sovereignty. Sensitive information can stay on-site, helping organizations comply with strict regulations in industries such as healthcare, finance, and manufacturing. Companies gain more control over how and where their data is stored and accessed.

Another important benefit is efficiency. By filtering and aggregating data locally, organizations significantly reduce the amount of data that must be transmitted to the cloud. This lowers bandwidth costs and reduces the load on central servers, making systems more scalable and sustainable.

Edge Computing Use Case

A real-world example of edge computing can be seen in a laboratory research project in the United States, where edge infrastructure supported live pharmaceutical experiments on animals.

In this case, the laboratory itself functioned as the edge.

During experiments, animals were connected to multiple biometric and biochemical sensors measuring heart rate, temperature, oxygen saturation, respiratory indicators, blood gas levels, and other critical parameters. Up to 50 different metrics were monitored simultaneously, with data updates arriving as frequently as 100 times per second. This produced a constant high-volume data stream that could not tolerate delays.

Instead of sending all raw data to the cloud, it was processed locally on an edge device powered by NVIDIA Orin NX hardware. This device included high-performance CPUs and GPUs, NVMe storage, and enough memory to handle real-time analytics.

The edge system aggregated and normalized sensor data and continuously evaluated incoming signals. If abnormal patterns or dangerous conditions were detected, the system immediately generated alerts and recommendations for the laboratory staff. This allowed fast medical intervention or, if necessary, termination of the experiment to protect the animal.

Artificial intelligence was used in this setup in a specific way. AI models were trained in the cloud, where sufficient compute resources were available. After training, the models were deployed to the edge device to perform real-time inference — detecting anomalies and supporting decision-making inside the laboratory.

Edge vs. Cloud Computing

Organizations often ask when they should choose edge computing instead of cloud computing. The answer depends on the workload and its specific requirements.

Edge computing is best suited for real-time tasks, low-latency operations, high-reliability environments, and handling sensitive data locally. Running compute-intensive workloads at the edge can reduce cloud costs, especially for continuous, high-frequency processing. Edge systems also provide faster response times and help meet regulatory requirements.

Cloud computing remains superior for large-scale analytics, centralized storage, AI model training, and workloads that do not require immediate responses. Cloud object storage services provide very low-cost long-term storage, which would be difficult and expensive to replicate entirely on edge infrastructure.

Most organizations use a hybrid approach. In this model, real-time processing and AI inference occur at the edge, while aggregated data, backups, and disaster recovery storage are managed in the cloud. This balances performance, cost, and resilience.

In simple terms: urgent and compute-heavy tasks run at the edge, while storage, training, and large-scale analytics stay in the cloud.

Ready to explore how to bring the Cloud experience to the Edge in your project?

Edge Computing and Emerging Technologies

Edge computing works closely with several emerging technologies.

Edge AI allows machine learning models to run directly on devices, enabling real-time object detection and predictive intelligence.

5G networks expand edge capabilities by improving bandwidth and reducing latency.

Fog computing introduces intermediate layers between edge devices and the cloud, helping coordinate data processing across distributed environments.

Together, these technologies create a system where intelligence is distributed across devices, edge nodes, and cloud platforms.

Challenges and Deployment Considerations

Deploying edge computing at scale presents unique challenges.

Organizations must manage distributed infrastructure across multiple locations, secure data as it moves between devices and cloud systems, and protect hardware from cyber threats.

Handling large data volumes requires careful planning around storage, redundancy, and network availability. Since edge sites may operate in environments with unreliable connectivity, systems must be designed to continue functioning even when connectivity is unavailable.

Successful edge deployments depend on proper infrastructure planning — selecting suitable hardware, integrating with cloud platforms, and ensuring applications can be remotely deployed and updated across distributed nodes.

Conclusion

Edge computing doesn’t replace the cloud — it completes it. By processing data where it’s created, organizations reduce latency, keep sensitive information on-site, lower bandwidth costs, and run real-time AI inference even when connectivity is unreliable.

The most effective approach is a hybrid edge–cloud architecture: time-critical workloads execute locally, while the cloud handles orchestration, long-term storage, analytics, and model training. If you’re planning an edge deployment (or want to evolve from a single device to a resilient edge cluster), Edge Solutions Lab can help you design the right architecture and take it from concept to production — so you get “cloud-like” scalability and operations at the edge without the fragility.

Frequently Asked Questions

Why is edge computing important for real-time data processing?

Edge computing enables real-time data processing and analysis at the network’s edge. When data is generated at remote sites, edge solutions enable quick responses, reduce bandwidth consumption to the public cloud, and improve performance for time-sensitive edge applications.

Why is edge computing important for real-time data processing?

Edge computing enables real-time data processing and analysis at the network’s edge. When data is generated at remote sites, edge solutions enable quick responses, reduce bandwidth consumption to the public cloud, and improve performance for time-sensitive edge applications.

What are common use cases of edge computing and edge solutions?

Edge computing use cases include industrial IoT, autonomous vehicles, retail analytics, and smart cities. Edge computing enables local processing and storage of data at the edge, reducing the volume of data sent to cloud providers and enhancing reliability.

How do cloud and edge computing work together in hybrid cloud setups?

Cloud and edge computing complement each other: hybrid cloud uses cloud servers for central analytics, while edge data processing handles immediate filtering and processing. Edge and cloud computing together support scalable computing infrastructure and advanced data analysis.

What benefits do businesses get from using edge computing solutions?

Edge computing offers lower latency, reduced bandwidth costs, improved privacy, and resilience. Edge computing enables faster decision-making by processing and storing data locally, making edge strategies ideal for data generated in distributed locations.

What should organizations consider when adopting edge computing infrastructure?

Consider edge computing infrastructure, security, management across edge network and cloud providers, and integration with existing systems. Learning about edge services and edge computing technology helps plan deployments, monitor edge computing initiatives, and anticipate their future.