Edge–Cloud Convergence: How AI, Edge Computing, and the Cloud Are Transforming the Future of IoT

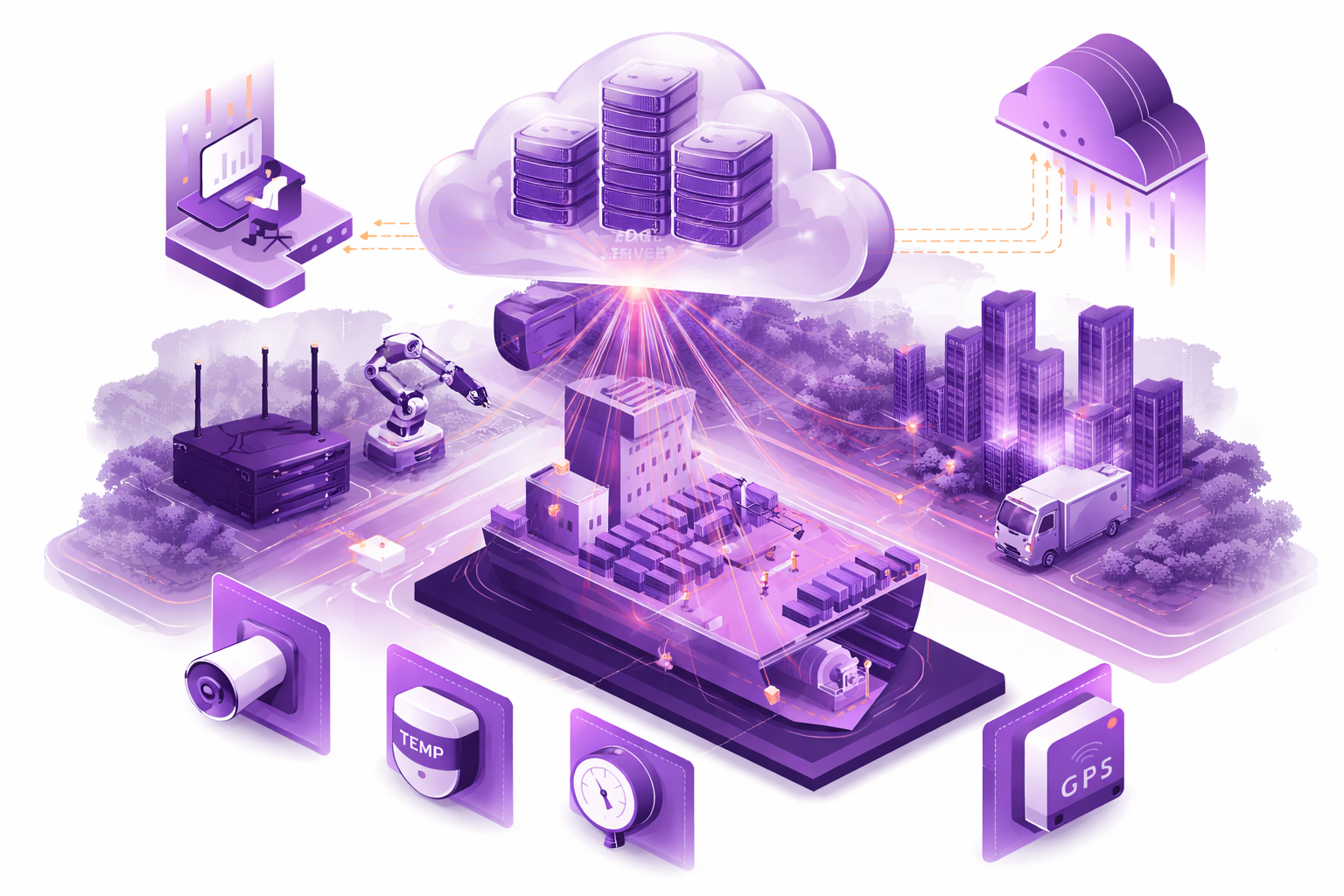

Edge–cloud convergence is reshaping how businesses build, scale, and secure digital systems. In simple terms, it combines the power of cloud computing with the speed of edge computing, enabling organisations to run AI-driven IoT solutions with real-time responsiveness.

This shift is becoming especially important as more companies modernise their operations with connected devices and intelligent automation. At Edge Solutions Lab, we see this transformation happening every day across client projects — from early hardware prototyping to full hardware–software integration.

Introduction

Edge–cloud convergence brings computing power closer to where data is actually generated, instead of relying only on a centralized cloud. The result is faster response times, reduced latency, and improved reliability — three factors that are now essential for scaling IoT and AI.

As enterprises deploy thousands of connected devices, the traditional approach of sending all data to the cloud stops scaling. Bandwidth costs grow, cybersecurity risks increase, and regulatory pressure around data privacy becomes stronger. Together, these pressures are accelerating the shift toward distributed, hybrid architectures.

This shift also changes how companies think about the cloud itself. Instead of being a remote destination where everything runs, the cloud becomes a flexible orchestration layer that manages compute resources across both edge and cloud environments.

In this model, cloud platforms, edge devices, and AI systems form a unified ecosystem — one that can adapt to real-world conditions instead of fighting against them.

Ready to explore how to bring the Cloud experience to the Edge in your project?

Why Edge–Cloud Convergence Matters for AI and IoT

Limitations of Traditional Cloud-Only Architectures

Cloud computing remains a strong foundation for modern digital systems, but cloud-only architectures start to show clear limitations at scale — especially in IoT- and AI-heavy environments.

The first limitation is response time. When data has to travel from devices or sensors to a remote cloud data center and back, delays are unavoidable. Even small amounts of latency can break real-time workflows, disrupt control loops, or reduce the effectiveness of automated decisions. In many IoT scenarios, waiting for a full round trip to the cloud simply isn’t acceptable.

The second limitation is cost. The pay-as-you-go model works well for bursty or unpredictable workloads, but it becomes expensive when large volumes of data are generated continuously. In cloud-only setups, raw data has to be transmitted, stored, and processed centrally. As a result, organisations pay not only for compute resources, but also for data transfer, ingestion, and long-term storage. As data volumes grow, these costs can quickly exceed initial expectations.

Physical distance and connectivity are other major factors. Cloud data centers are, by definition, remote. In environments with unstable or limited connectivity — such as industrial sites, remote locations, or mobile deployments — relying solely on the cloud introduces fragility. When connectivity drops, visibility and control can disappear entirely.

Edge–cloud convergence addresses these constraints by redistributing data processing and workload execution.

Instead of sending everything to the cloud, a significant portion of computation is moved closer to the source — onto edge devices equipped with CPUs, GPUs, or eGPUs.

At the edge, data can be:

- consumed

- filtered

- merged

- aggregated

Compute-heavy operations such as feature extraction, statistical aggregation, anomaly detection, and even map-reduce–style processing can run directly on edge hardware. This dramatically reduces the volume of data that needs to be transmitted upstream.
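
The statistical aggregation described above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the `WindowSummary` type, the `summarize_windows` function, and the fixed window size are all assumptions made for the example. It shows how a run of raw readings collapses into one compact record per window before anything is sent upstream.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable, List


@dataclass
class WindowSummary:
    """Compact record sent upstream in place of the raw samples (hypothetical type)."""
    count: int
    minimum: float
    maximum: float
    average: float


def summarize_windows(samples: Iterable[float], window_size: int) -> List[WindowSummary]:
    """Collapse a stream of raw readings into one summary per fixed-size window."""
    if window_size <= 0:
        raise ValueError("window_size must be positive")

    def build(window: List[float]) -> WindowSummary:
        return WindowSummary(len(window), min(window), max(window), mean(window))

    summaries: List[WindowSummary] = []
    window: List[float] = []
    for value in samples:
        window.append(value)
        if len(window) == window_size:
            summaries.append(build(window))
            window = []
    if window:
        # Flush the final, partially filled window so no reading is lost.
        summaries.append(build(window))
    return summaries


if __name__ == "__main__":
    raw_readings = [20.0, 20.5, 21.0, 35.0, 20.2, 20.1, 19.9]
    for summary in summarize_windows(raw_readings, window_size=3):
        print(summary)
```

With a window of 60 samples, for instance, the device would transmit one record instead of 60 readings, while the minimum and maximum fields still preserve short-lived peaks.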

For AI workloads, this model is especially powerful. Inference can run directly on edge GPUs, detecting anomalies, patterns, or critical events in real time. Only processed results — summaries, peaks, alerts, or meaningful insights — are sent to the cloud for further analysis or coordination.
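
As a rough sketch of the "send only alerts" pattern, the example below flags readings that deviate strongly from a rolling baseline. A simple z-score check stands in for a real model here, since an actual deployment would run a trained network on the edge accelerator. The function name `detect_anomalies`, the history size, the warm-up length, and the threshold are all assumptions made for illustration.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque, Dict, Iterable, List

MIN_HISTORY = 5  # assumed warm-up length before any reading is scored


def detect_anomalies(
    readings: Iterable[float],
    history_size: int = 20,
    threshold: float = 3.0,
) -> List[Dict[str, float]]:
    """Return alert records for readings far from the rolling baseline."""
    history: Deque[float] = deque(maxlen=history_size)
    alerts: List[Dict[str, float]] = []
    for index, value in enumerate(readings):
        if len(history) >= MIN_HISTORY:
            baseline = mean(history)
            spread = pstdev(history)
            if spread > 0:
                z_score = (value - baseline) / spread
                if abs(z_score) > threshold:
                    alerts.append({"index": index, "value": value, "z_score": z_score})
                    # Keep the outlier out of the baseline so it does not mask later ones.
                    continue
        history.append(value)
    return alerts


if __name__ == "__main__":
    stream = [10.0, 10.1, 9.9, 10.0, 10.2, 9.8, 50.0, 10.1]
    for alert in detect_anomalies(stream):
        print(alert)  # only these records would be sent to the cloud
```

Everything that does not trigger an alert stays on the device, which is the point of the pattern: the upstream payload scales with the number of events, not with the sampling rate.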

The same approach applies to heavy data, such as video. Instead of streaming raw video continuously to the cloud, edge devices can analyze it locally, compress it, or send only relevant segments that require attention. This significantly reduces bandwidth usage while preserving operational visibility.
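
A back-of-the-envelope calculation makes the bandwidth difference concrete. Every figure below is hypothetical (a single 4 Mbps camera stream and 30 event clips of 20 seconds per day); real numbers depend on codec, resolution, and how often events occur.

```python
def upload_gigabytes(bitrate_mbps: float, seconds: float) -> float:
    """Volume in gigabytes (10**9 bytes) of a stream at a given bitrate and duration."""
    bits = bitrate_mbps * 1_000_000 * seconds
    return bits / 8 / 1_000_000_000


# Hypothetical figures, chosen only to make the arithmetic concrete.
BITRATE_MBPS = 4.0              # assumed bitrate of one camera stream
SECONDS_PER_DAY = 24 * 60 * 60
CLIPS_PER_DAY = 30              # assumed number of events worth uploading
CLIP_SECONDS = 20.0             # assumed length of each uploaded clip

continuous_gb = upload_gigabytes(BITRATE_MBPS, SECONDS_PER_DAY)
event_gb = upload_gigabytes(BITRATE_MBPS, CLIPS_PER_DAY * CLIP_SECONDS)
reduction = 1 - event_gb / continuous_gb

print(f"continuous streaming: {continuous_gb:.1f} GB/day")
print(f"event clips only:     {event_gb:.1f} GB/day")
print(f"reduction:            {reduction:.1%}")
```

Under these assumptions, continuous streaming uploads about 43.2 GB per camera per day, while event clips come to about 0.3 GB, a reduction of roughly 99%.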

In practice, edge–cloud convergence transforms the cloud from a destination for raw data into a coordination and intelligence layer. The edge handles high-volume, time-sensitive processing, while the cloud focuses on orchestration, cross-site analytics, model training, and long-term insights.

The result: faster systems, lower costs, and architectures that scale much more predictably.

How Convergence Improves Data Processing

Edge computing reduces bottlenecks by processing data locally before sending only relevant information to the cloud.

When data is processed close to the source — often within milliseconds — latency drops significantly. At the same time, organisations gain better operational continuity, even when network connectivity is inconsistent. This is especially critical in sectors like industrial IoT and healthcare, where delays are simply not acceptable.
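
One common way to get this kind of continuity is a store-and-forward buffer on the edge device. The sketch below is illustrative only: the `StoreAndForwardBuffer` class and the `send` callback (which returns `True` on success) are assumptions, and a real system would typically persist the queue to disk and add retry timing.

```python
from collections import deque
from typing import Any, Callable, Deque, Dict

Message = Dict[str, Any]


class StoreAndForwardBuffer:
    """Queue messages locally and deliver them in order once the uplink works."""

    def __init__(self, send: Callable[[Message], bool], max_items: int = 1000) -> None:
        self._send = send
        # A bounded deque drops the oldest message when full, capping memory use.
        self._pending: Deque[Message] = deque(maxlen=max_items)

    def publish(self, message: Message) -> None:
        """Enqueue a message, then try to drain the queue."""
        self._pending.append(message)
        self.flush()

    def flush(self) -> int:
        """Send queued messages oldest-first; stop at the first failure."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break  # uplink is down, keep the message for the next attempt
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._pending)


if __name__ == "__main__":
    link_up = False
    delivered = []

    def fake_send(message: Message) -> bool:
        """Stand-in for a real uplink call (hypothetical)."""
        if link_up:
            delivered.append(message)
        return link_up

    buffer = StoreAndForwardBuffer(fake_send)
    buffer.publish({"event": "overheat", "id": 1})
    print("pending while offline:", buffer.pending)
    link_up = True
    buffer.publish({"event": "recovered", "id": 2})
    print("delivered after reconnect:", [m["id"] for m in delivered])
```

The design choice worth noting is the bounded queue: during a long outage the device keeps the most recent messages and discards the oldest, which suits telemetry but not audit data that must never be dropped.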

By moving inference, filtering, and initial analytics to the edge, companies also reduce the amount of data they need to send to the cloud. This lowers bandwidth consumption and reduces cloud processing costs.

In practice, this means that heavy data from sensors and devices is pre-processed at the edge, and only essential insights are transmitted to the cloud for deeper analysis.

AI’s Fundamental Role in the Convergence

Artificial intelligence is one of the key forces accelerating edge–cloud convergence.

AI development is moving fast, driven by massive investment and strong competition, and it is producing highly capable models. Today, AI systems can handle a wide range of tasks, from advanced data analysis and video processing to complex safety scenarios such as predicting collisions between people and heavy machinery in industrial environments.

At the same time, most modern AI models are relatively generic by default. To deliver real business value, they need to be adapted and fine-tuned for specific use cases, environments, and datasets. This is where the difference between cloud and edge becomes important.

Training and fine-tuning AI models require large datasets and significant computing power. Video datasets, image collections, and large volumes of historical data are typically too heavy to process on edge devices. That’s why the cloud remains the natural environment for training and refining models. In the cloud, models can be trained, validated, tested, and iterated efficiently using scalable compute infrastructure.

Once a model is trained and optimized, the edge comes into play. Modern edge hardware supports inference engines that allow AI models to run directly on edge devices. These models can perform real-time inference close to where data is generated, acting immediately on fresh inputs without depending on constant cloud connectivity.

This is where real convergence happens in practice. Models are trained in the cloud, then compressed, optimized, and deployed to the edge. At the edge, they handle tasks like anomaly detection, predictive maintenance, video analytics, and real-time decision-making — exactly where latency and connectivity constraints make cloud-only processing impractical.
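
The "compressed, optimized" step often includes quantization, where 32-bit floating-point weights are stored as 8-bit integers. The sketch below shows symmetric per-tensor quantization on a plain list of weights. It is one technique among several and is not taken from any specific toolchain; the function names are assumptions, and real deployments would rely on the quantization tooling of their inference framework.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    original = [0.82, -1.0, 0.031, 0.0, -0.47]
    q_weights, q_scale = quantize_int8(original)
    restored = dequantize(q_weights, q_scale)
    worst_error = max(abs(a - b) for a, b in zip(original, restored))
    print("int8 weights:", q_weights)
    print("scale:", q_scale)
    print("worst-case error:", worst_error)
```

Each weight shrinks from four bytes to one, at the cost of a rounding error of at most half the scale per weight. Whether that loss is acceptable has to be checked against validation data before the model is deployed.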

Running AI at the edge also reduces the need to send large volumes of raw data to the cloud. Instead of streaming everything, edge systems generate insights locally and send only relevant events or summaries upstream. This improves responsiveness, lowers costs, and reduces load on cloud infrastructure.

In this model, cloud AI, edge AI, and hybrid inference all work together as part of a single adaptive system. The cloud handles heavy computation and coordination, while the edge delivers speed, autonomy, and efficient execution.

The Future of Edge–Cloud–AI Convergence

The future of edge–cloud–AI convergence is moving toward a world of hyper-distributed intelligence, where computing and decision-making are no longer centralized but distributed across devices, edge systems, and cloud platforms.

Instead of relying on a single central system, organisations will operate ecosystems where:

- devices collect data

- edge systems react in real time

- the cloud handles global coordination and analytics

A major driver of this shift will be 5G and, later, 6G networks. These technologies will enable faster, more reliable communication between devices and systems, making it easier to run distributed AI workloads at scale.

At the same time, the industry is moving toward standardization. Today, fragmentation — different hardware, software stacks, and protocols — makes edge systems hard to scale. As standards for APIs, security, and orchestration evolve, building distributed systems will become much simpler and more predictable.

Another key shift is toward autonomous infrastructure. Future edge systems will increasingly become self-optimizing and self-healing. They will monitor their own performance, rebalance workloads, detect failures early, and recover automatically with minimal human involvement. This will make distributed systems far more resilient and easier to manage.

Importantly, this evolution strengthens the relationship between edge and cloud rather than replacing one with the other. The cloud becomes the coordination layer, managing models, devices, and global visibility, while the edge handles local execution and real-time decisions.

AI will sit at the center of this convergence. More intelligence will run directly at the edge, enabling faster and more context-aware decisions. At the same time, the cloud will continue to handle training, retraining, and lifecycle management of models.

This balance creates a more adaptive computing model, where intelligence is distributed based on performance, cost, and operational needs.

These advances will drive adoption across industries — from manufacturing and healthcare to energy, logistics, and smart infrastructure — making systems more responsive, scalable, and practical.

At the same time, this shift supports more sustainable computing. By processing data where it is needed instead of moving everything centrally, organisations reduce unnecessary data transfer and make more efficient use of resources.

In short, this is not just about faster technology — it’s about building smarter, more autonomous, and more adaptable systems.

Conclusion

Edge–cloud convergence is becoming a foundational strategy for modern IoT and AI systems.

By distributing compute, processing data locally, and using the cloud for coordination and large-scale tasks, businesses gain:

- faster response times

- lower latency

- greater resilience

For organisations preparing for the next wave of digital transformation, combining edge and cloud is no longer just an advantage — it’s a competitive necessity.

With the right partner — from hardware design to full system integration — this journey becomes much more manageable.

If you’re exploring edge solutions, Edge Solutions Lab supports end-to-end development — from device engineering to cloud orchestration.

Frequently Asked Questions

What is edge computing and how does it differ from cloud computing?

Edge computing processes data closer to where it is generated — on devices or local systems — instead of sending everything to centralized cloud servers. Cloud computing provides large-scale compute and storage, while edge focuses on speed, latency reduction, and real-time response. In practice, workloads are split between edge and cloud.

Why is edge–cloud convergence important for IoT?

IoT devices generate massive amounts of data. Edge computing processes and filters this data locally, while the cloud provides scalability and analytics. Together, they ensure efficient bandwidth use and fast response times.

How do edge AI and cloud computing work together?

Edge AI runs inference locally for real-time decisions, while the cloud handles model training, updates, and large-scale learning. This creates a hybrid system that is both fast and continuously improving.

What applications benefit most from this approach?

Industrial automation, autonomous vehicles, health monitoring, smart cities, and AR/VR. In general, any application where response time, autonomy, and scalability are critical.

How do security and privacy change?

Edge computing improves privacy by keeping data local, but it increases complexity because security must be managed across many devices.

What infrastructure is required?

Edge devices, connectivity, orchestration tools, and cloud infrastructure — all working together.

What challenges remain?

Complex orchestration, data consistency, network reliability, and managing large fleets of devices.