Benefits of Edge Computing — How Processing at the Edge Transforms Real-Time Systems

Edge computing allows organizations to process and analyze data closer to its source, significantly reducing response times. For enterprises developing real-time systems, this can mean the difference between latency-related failures and smooth, reliable operations.

In this article, we’ll look at the benefits of edge computing and how processing at the edge transforms real-time systems — giving business leaders a practical view of when, why, and how to adopt it.

What is edge computing — and why real-time systems demand it

Understanding edge computing

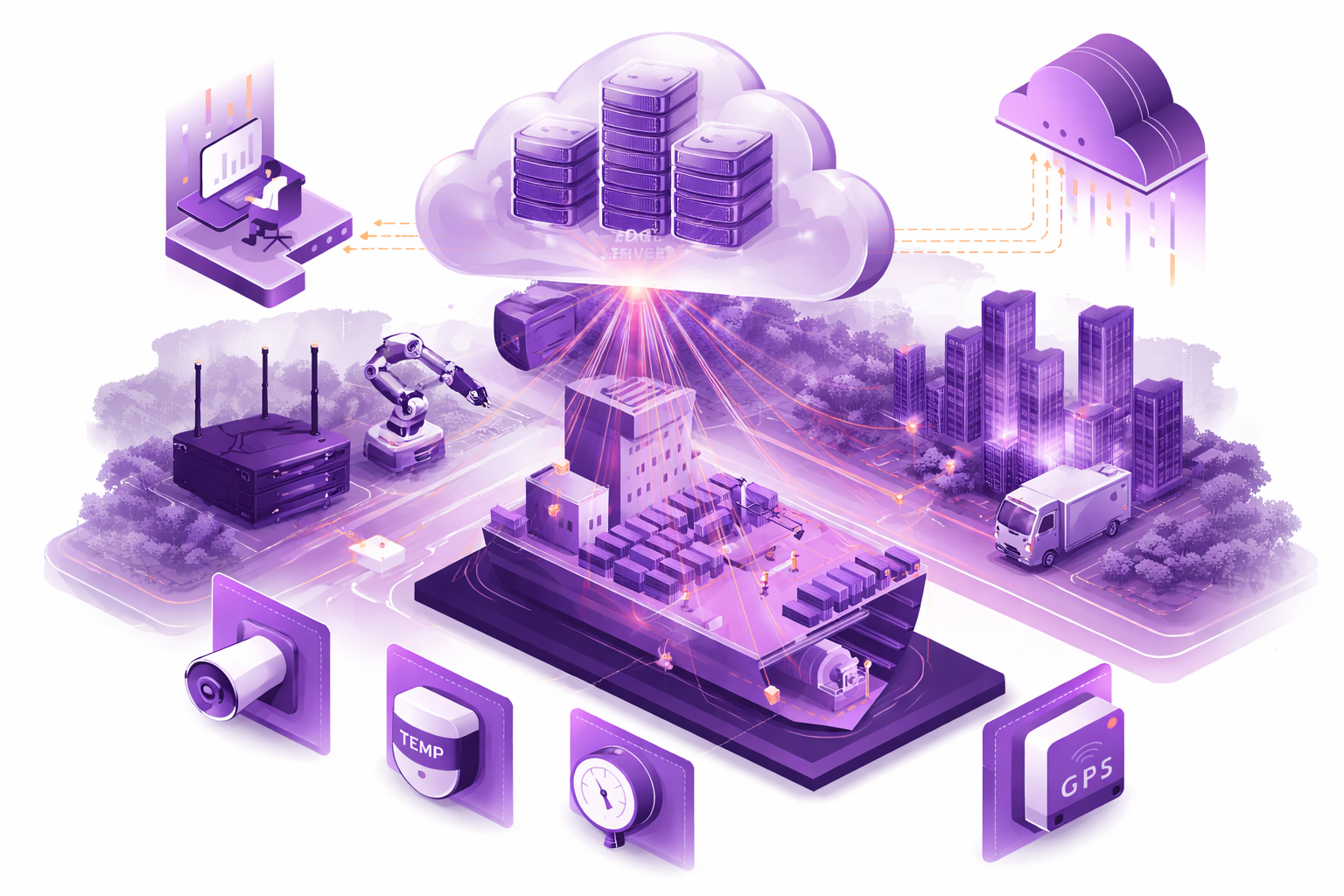

Edge computing is a distributed computing model in which processing occurs on or near the devices that generate data, rather than relying solely on remote, centralized cloud servers or distant data centers. In practice, edge devices — such as gateways, embedded systems, micro data centers, or industrial gateways — handle compute tasks locally. That reduces the need to send all data to a central data center or cloud infrastructure for processing.

For businesses going through digital transformation, edge computing works alongside traditional cloud computing rather than replacing it. By shifting part of the workload to edge devices, companies can reduce pressure on cloud services, cut bandwidth consumption, and enable immediate local decisions.

Why real-time demands push computing closer to the source

When systems need to respond in real time — for example, in industrial automation, autonomous vehicles, or live sensor networks — even a few hundred milliseconds of latency can become a serious problem. Sending sensor data across the internet to a distant server, waiting for it to be processed, and then returning the result introduces both delay and unpredictability.

By using computing at the edge of the network, organizations make sure data is processed immediately where it originates. This minimizes round-trip delays and supports real-time decision-making. For any business relying on real-time analytics, control loops, or critical automation, that kind of responsiveness is essential.

Ready to explore how to bring the Cloud experience to the Edge in your project?

Key Benefits of Edge Computing

Reduced Latency and Faster Response Times

One of the clearest and most valuable benefits of edge computing is the dramatic reduction in latency. When data is processed closer to where it’s generated, response times drop significantly — often by orders of magnitude compared to cloud-based processing.

In edge-based systems, compute modules are deployed in the same local network and at the same physical location as sensors, drones, cameras, industrial machines, or other IoT devices. That means data no longer has to travel over the public internet, through remote data centers, site-to-site VPNs, or dedicated cloud connections before a decision can be made.

As a result, the full available network bandwidth is used locally and much more efficiently. There are no long network hops, no unpredictable routing delays, and no dependency on external connectivity for real-time operations. In many cases, this reduces response times from hundreds of milliseconds to just a few milliseconds — or even less, depending on the setup.

For real-time systems, that difference is critical. In industrial automation, robotics, autonomous vehicles, or drone operations, even small delays can lead to unstable control loops, degraded performance, or full system failure. Edge computing removes those delays by ensuring decisions are made directly at the edge, exactly where events occur.

This local processing model enables true real-time decision-making, even for demanding workloads involving high-frequency sensor data or continuous data streams. Systems can react immediately to changes in their environment rather than waiting for a round-trip to a remote cloud server.

In short, by moving computation to the edge, organizations remove unnecessary network delays. Response times shrink dramatically, system behavior becomes more predictable, and real-time applications can operate reliably at full performance.

Bandwidth Optimization and Cost Reduction

Many modern systems generate enormous volumes of data. Sensors, cameras, industrial equipment, and IoT devices can produce continuous, high-frequency data streams. Sending all of that raw data directly to a centralized cloud infrastructure quickly becomes expensive and inefficient. Edge computing addresses this by processing and filtering data locally, so only meaningful, aggregated, or pre-analyzed information is sent onward.

Cloud providers do offer ways to improve connectivity between edge locations and the cloud, but those solutions come at a cost. In practice, there are several common ways to connect an edge location to cloud infrastructure, and each comes with its own trade-offs.

The most basic option is connectivity over the public internet. In that model, data travels from the edge location through public gateways and internet provider routes before reaching a cloud data center. While this approach is relatively simple and inexpensive to get started with, performance and latency are unpredictable. Network paths can vary a lot, sometimes involving many intermediate hops, which introduces variability in both speed and reliability.

A more advanced option is a site-to-site VPN. Here, a dedicated encrypted tunnel connects the edge location directly to cloud resources. This improves security and reserves a portion of bandwidth for communication. Even so, it still relies on standard network capacity and doesn’t eliminate bandwidth limitations or ongoing data transfer costs.

The most performant — and the most expensive — option is a direct connection service such as AWS Direct Connect. In this setup, cloud providers establish dedicated backbone connections with internet service providers, allowing edge locations to connect directly to cloud data centers with guaranteed bandwidth. These connections can support very high throughput, from gigabits to tens or even hundreds of gigabits per second. But that level of performance comes with major upfront costs, long-term contracts, and limited flexibility. In most cases, such solutions only make sense for specific scenarios like large one-time data migrations or highly regulated environments.

This is where edge computing fundamentally changes the economics. When significant compute capacity is available at the edge, there is often no need to continuously stream large volumes of raw data to the cloud at all. Instead, data can be processed locally, and only compressed, aggregated, or event-based results are sent upstream. This dramatically reduces bandwidth requirements and removes the need for costly high-throughput cloud connectivity.

Edge-based architectures also offer greater control over the local network. Bandwidth can be segmented and prioritized for specific sensors, devices, or data streams that generate especially high volumes of data. If local network capacity becomes a bottleneck, it can usually be expanded directly on-site by upgrading switches, routers, or internal links — often at a much lower cost and with more flexibility than provisioning new cloud connectivity services.

Unlike cloud networking solutions that require upfront commitments, contracts, and long-term capacity planning, local network expansion is usually more adaptable. Organizations that already operate and maintain on-site infrastructure can often treat network upgrades as incremental operational tasks rather than major strategic investments.

In short, edge computing shifts cost optimization away from expensive, always-on data transfer pipelines and toward local processing and intelligent data reduction. By minimizing unnecessary data movement, companies lower bandwidth costs, reduce cloud storage and transfer fees, and build systems that scale more predictably and economically over time.

Improved Reliability and Local Autonomy

Edge computing also significantly improves system reliability and operational autonomy, especially in complex systems made up of multiple subsystems. In practice, overall system reliability is not additive — it is multiplicative. If each subsystem has a reliability of 99%, a system made up of three such components already drops to roughly 97% total availability. Once additional dependencies are introduced — such as remote cloud services, network providers, or inter-regional connectivity — overall reliability drops even further.

For many businesses, a few percentage points of availability loss translate into hours of downtime each year. More importantly, that downtime rarely appears as one long outage. More often, it shows up as frequent, short interruptions that continuously disrupt business processes. In real-time or mission-critical systems, even milliseconds of failure can have serious consequences. In some environments, a delay of just a few milliseconds can cause physical damage, safety incidents, or irreversible operational errors.

By shifting critical computation to the edge, organizations remove several external dependencies from the execution path. Edge systems can operate independently of wide-area networks and centralized cloud services, which allows them to continue functioning even when connectivity is degraded or temporarily unavailable. Local edge servers and devices can process data autonomously, maintain control loops, and store results until connectivity is restored.

This kind of local autonomy is especially important in environments with limited or unreliable connectivity. A strong example comes from underground mining operations. Many mines operate in air-gapped or connectivity-constrained environments, yet they still require continuous, reliable monitoring to protect human life. In deep underground coal mines, the sudden release of methane or other hazardous gases is a constant risk. In those situations, immediate reaction is critical.

In such environments, gas sensors are deployed throughout the mine, while workers wear biometric monitoring devices that continuously track physiological indicators. Intermediate edge compute modules are installed along the length of the mine — sometimes spanning kilometers underground. When gas sensors detect dangerous conditions, the system processes this data locally and immediately sends alerts directly to workers in affected zones, warning them to evacuate or avoid entering hazardous areas. That decision-making happens entirely at the edge, without relying on external networks or cloud connectivity.

Edge Solutions Lab has designed and tested such systems end to end — from custom wearable devices and edge compute modules to local networking and software platforms that coordinate real-time responses. Standard industrial gas sensors were integrated as part of the solution, while custom wearable devices were developed to collect over 50 biometric parameters at high frequency, enabling accurate and timely detection of dangerous conditions. This architecture ensures that even during network outages, the system continues operating and protecting lives.

In short, edge computing improves reliability by reducing external dependencies and enables local autonomy that allows systems to function predictably and safely — even in the most demanding environments.

Enhanced Data Privacy and Security

Edge computing also offers meaningful advantages in data privacy and security. Sending sensitive data to centralized cloud infrastructure increases exposure and introduces compliance risks, especially in regulated industries. By processing and storing data locally, edge architectures significantly reduce the amount of sensitive information that must travel across public or external networks.

Modern edge hardware platforms already provide strong security foundations. Many compute modules support full-disk encryption and secure boot mechanisms, allowing data stored on edge devices to remain encrypted at rest. However, unlike cloud environments — where many security mechanisms are pre-built and centrally managed — edge security must be implemented and maintained across many distributed devices.

That means building secure edge systems often requires more upfront effort than deploying workloads in the cloud. Each device needs to be properly configured, encrypted, monitored, and updated. Standardized approaches to encryption, key management, device authentication, and security monitoring are essential for maintaining consistency and reducing operational risk.

While this can feel complex for organizations starting from scratch, it becomes far more manageable with experience. Edge Solutions Lab has built multiple secure edge systems in regulated and mission-critical domains, developing repeatable security architectures that balance strong protection with reasonable implementation costs. By applying proven encryption standards, device hardening practices, and monitoring mechanisms, edge environments can achieve high levels of security without excessive overhead.

Keeping sensitive data close to its source also makes regulatory compliance easier. Industries subject to strict data-protection laws — such as healthcare, industrial safety, energy, and defense — benefit from reduced data exposure and clearer control over where data is stored and processed. Only processed, compressed, or non-sensitive data needs to be transmitted to centralized systems for reporting or long-term storage.

In practice, edge computing does not remove the need for security investment — it reshapes it. Instead of relying entirely on cloud providers, organizations take direct ownership of data protection at the device and site level. When done well, this results in systems that are both more secure and better aligned with privacy and compliance requirements.

Ready to explore how to bring the Cloud experience to the Edge in your project?

Challenges and Drawbacks of Edge Computing

Despite its clear advantages, edge computing introduces a new set of challenges that organizations need to address when moving compute closer to the physical world. Many of these challenges are not theoretical — they come directly from real-world deployments and operational experience.

Operational and Deployment Complexity

One of the biggest challenges in modern edge computing is clustering and orchestration of edge compute resources. While the market offers a wide range of edge servers and devices, far fewer solutions address how to combine multiple edge nodes into a single, coordinated system.

Many real-world workloads cannot be handled efficiently by a single edge device. They need either more compute power than one node can provide or parallel execution across several devices. That makes clustering a necessity rather than just an optimization. At the same time, building and managing such clusters is complex — especially for end users who are not deeply experienced in distributed systems. Creating a reliable, production-ready edge cluster that can scale, recover from failures, and operate autonomously remains one of the least mature areas in the edge ecosystem.

Operational complexity goes beyond clustering. Unlike cloud environments — where infrastructure management, updates, and orchestration are largely abstracted away — edge systems have to be managed directly in the field. Updating applications while maintaining backward compatibility with existing sensors, rolling out new versions without downtime, and ensuring continuous operation during upgrades are all non-trivial tasks. In many cases, systems simply cannot afford to go offline, even briefly, during updates.

Hardware replacement adds another layer of complexity. When edge devices fail, especially in IoT-heavy environments, they have to rejoin the cluster, synchronize state, replicate data if needed, and immediately resume their role in the system. Coordinating that process reliably across many locations creates additional operational overhead.

Harsh Environmental Conditions and Hardware Constraints

Edge devices operate in environments that are fundamentally different from cloud data centers. While cloud providers maintain tightly controlled, almost sterile conditions — with regulated temperatures, humidity control, and constant monitoring — edge hardware is often deployed in harsh and unpredictable environments.

Examples include underground mines with high levels of dust and humidity, offshore installations exposed to saltwater and corrosion, or remote industrial sites with limited physical protection. In these kinds of settings, edge devices may be mounted directly to walls, exposed to vibration, debris, or even physical impact. Overheating, dust accumulation, and component degradation are all very real risks.

Because of that, edge systems need to be designed with reliability, high availability, and fault tolerance as core requirements from the start. That includes redundant components, failover strategies, and distributed execution models that allow systems to continue operating even when individual devices fail. Designing such systems correctly is a serious engineering challenge and cannot be treated as an afterthought.

Security and Data Protection Concerns

While local data processing can improve privacy, securing a large number of distributed edge devices is inherently more complex than securing centralized cloud infrastructure. Each edge node has to be hardened, encrypted, monitored, and updated regularly. Security is no longer centralized — it becomes a responsibility at every physical location.

Unlike cloud providers, which have spent years developing standardized security frameworks, organizations deploying edge systems must build their own consistent approaches to encryption, authentication, monitoring, and compliance across all devices. Without careful design, this can lead to inconsistent configurations and a larger attack surface.

Potential Fragmentation and Integration Issues

Edge environments are often heterogeneous by nature. Devices from multiple vendors, different hardware architectures, varied operating systems, and inconsistent configurations can quickly create fragmentation. Without strong standardization and orchestration, that diversity becomes hard to manage and expensive to scale.

If not addressed early, fragmented edge deployments can turn into long-term maintenance burdens — difficult to upgrade, hard to integrate, and costly to operate over time.

Conclusion

Edge computing offers powerful benefits — from reduced latency and faster response times to bandwidth optimization, reliability, and stronger data privacy. For real-time systems, processing at the edge often isn’t just an option — it’s a necessity.

At the same time, edge computing introduces complexity: distributed deployments, hardware management, security challenges, and integration overhead. The best results usually come when edge and cloud infrastructure work together in harmony.

For enterprises ready to embrace real-time applications — industrial automation, autonomous systems, IoT networks, or smart infrastructure — edge computing is a strategic choice that transforms how data is processed, analyzed, and acted on.

If you’re exploring edge solutions or looking to design an edge-enabled platform, reach out to our software design and development team — we help build edge-ready systems from the ground up.

Frequently Asked Questions

What latency improvements can I realistically expect with edge computing?

With edge computing, latency can drop from hundreds of milliseconds to single-digit milliseconds, depending on network conditions and how close the edge is to the data source. For real-time control systems, that often turns delayed response into near-instant action.

Does edge computing replace cloud computing completely?

Not at all. Edge computing complements centralized cloud rather than replacing it. The cloud remains essential for heavy analytics, global coordination, long-term storage, and batch processing.

Are all workloads suitable for processing at the edge?

No. Workloads that require heavy compute power, large-scale data analysis, or long-term storage are often still better suited for centralized cloud or data center environments. Edge is ideal for latency-sensitive, real-time, and data-privacy-sensitive tasks.

What are the security risks associated with distributed edge infrastructure?

Each edge node introduces a potential vulnerability: device tampering, outdated firmware, unsecured network links, or inconsistent configurations. Strong encryption, patch management, and consistent security policy enforcement are essential.

How complicated is managing many edge devices across locations?

It can be quite challenging. Distributed deployments require remote monitoring, maintenance automation, centralized logging, and scalable orchestration. Without the right tooling, operational overhead can increase quickly.

Can edge computing reduce operational costs?

Yes — by lowering data transfer costs, reducing cloud storage needs, and optimizing bandwidth usage. At the same time, hardware acquisition, maintenance, and management costs still need to be factored into the overall ROI.

Is edge computing compliant with data-privacy regulations such as GDPR?

Edge computing can help meet privacy requirements by keeping sensitive data local, limiting transfer to the cloud, and reducing exposure. But compliance still depends on secure storage, access control, and making sure data handling meets all relevant regulations.